Rain Symphony

A generative audiovisual experience where raindrops compose evolving musical patterns

Rain Symphony is an immersive, generative audiovisual experience designed to blur the line between natural phenomena and digital art — where each raindrop becomes a note in an ever-changing composition.

Live Demo

Watch the raindrops fall and create rippling patterns. Each drop triggers a unique visual and sonic response.

Project Overview

The project explores the relationship between visual and aural perception, and the interplay between randomness and structure in generative systems. Inspired by the meditative quality of rain, Rain Symphony digitally simulates rainfall to create an evolving audiovisual experience.

Each raindrop is governed by physics-based rules — gravity, velocity, and collision detection — while also serving as a trigger for generative sound. The result is a piece that feels both organic and algorithmic, natural and synthetic.

Technical Development

Particle System

Each raindrop is an autonomous particle with physics properties including mass, velocity, and acceleration. Gravity pulls them downward while slight horizontal variations create natural-looking trajectories.
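The particle described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the class name `Raindrop`, the `GRAVITY` constant, the drift range, and the injectable `rand` parameter are all assumptions made for the example. It is plain JavaScript, so the class can be used inside a p5.js sketch or run on its own.

```javascript
// Illustrative constant: downward acceleration in pixels per frame^2 (assumed value).
const GRAVITY = 0.25;

class Raindrop {
  // rand is injectable so the drift can be made deterministic for testing.
  constructor(x, y, mass = 1, rand = Math.random) {
    this.x = x;
    this.y = y;
    this.mass = mass;
    // Slight horizontal variation so drops do not fall in identical lines.
    this.vx = (rand() - 0.5) * 0.6;
    this.vy = 0;
    this.ax = 0;
    this.ay = 0;
  }

  // Accumulate a force for this frame; heavier drops respond less (a = F / m).
  applyForce(fx, fy) {
    this.ax += fx / this.mass;
    this.ay += fy / this.mass;
  }

  // Advance one frame. Returns true once the drop has reached the surface.
  update(surfaceY) {
    // Gravity is applied as a force proportional to mass,
    // so every drop accelerates downward at the same rate.
    this.applyForce(0, GRAVITY * this.mass);
    this.vx += this.ax;
    this.vy += this.ay;
    this.x += this.vx;
    this.y += this.vy;
    // Forces are re-accumulated each frame.
    this.ax = 0;
    this.ay = 0;
    if (this.y >= surfaceY) {
      this.y = surfaceY; // clamp to the surface so the impact point is exact
      return true;
    }
    return false;
  }
}
```

The boolean returned by `update()` is a simple form of collision detection: the caller can use it to remove the drop and trigger whatever should happen on impact.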

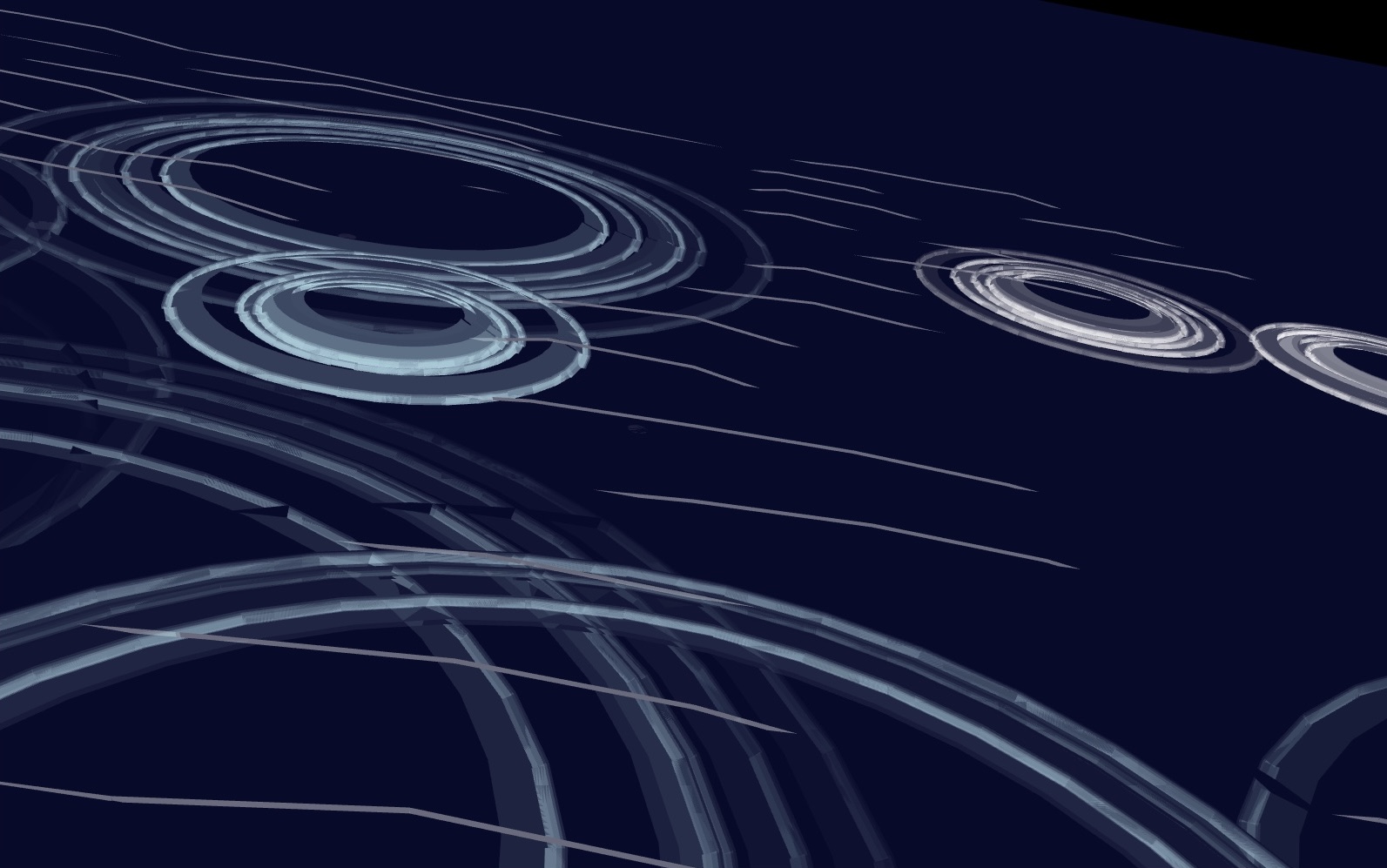

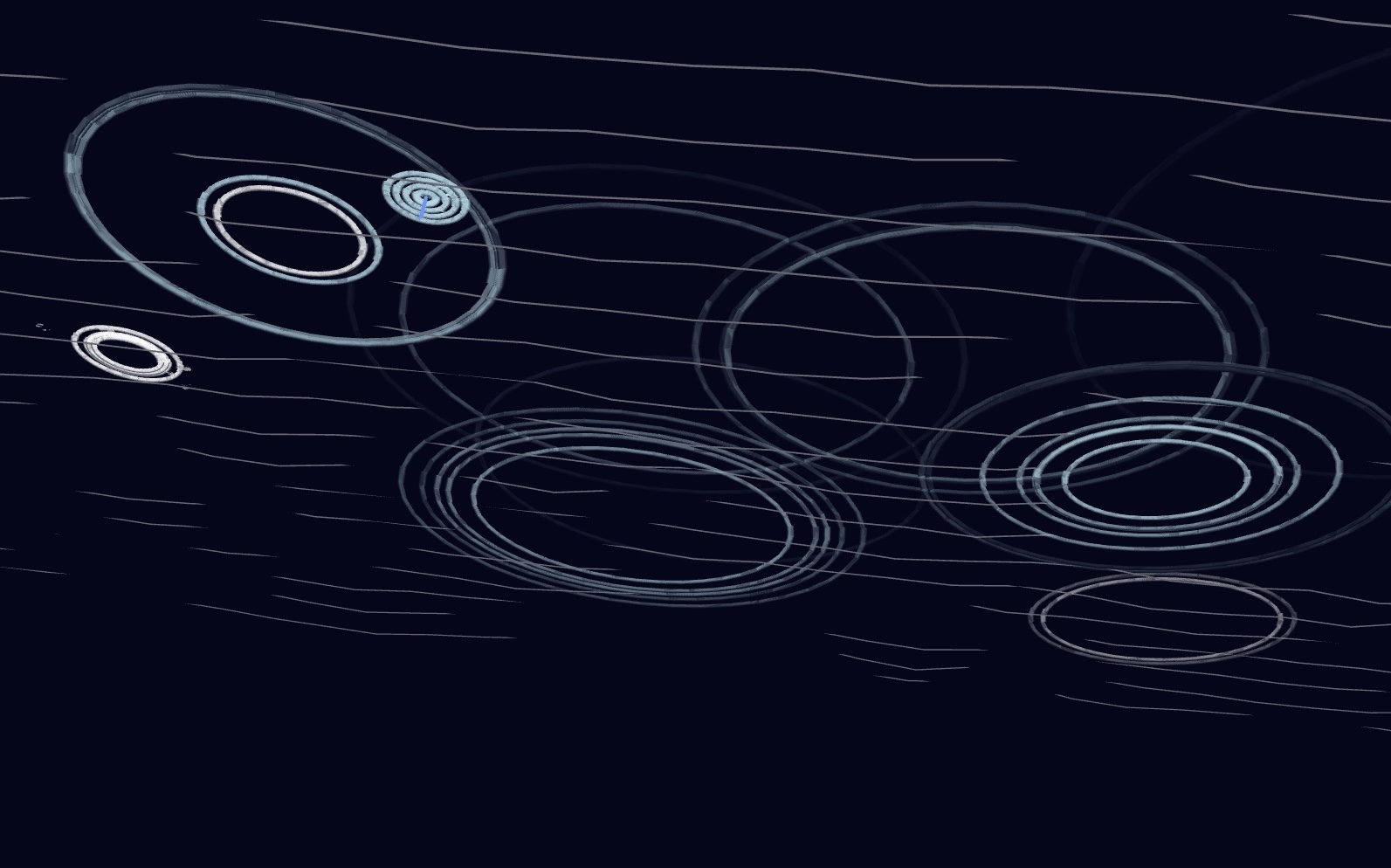

Ripple Dynamics

When raindrops hit the surface, they spawn expanding circular ripples with decreasing opacity. Multiple ripples interact and overlap, creating complex interference patterns.
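A ripple of this kind could be modelled as a circle whose radius grows while its opacity falls. The sketch below is one possible version under stated assumptions: the `Ripple` class, the linear fade, and the default `maxRadius` and `growth` values are illustrative and not taken from the project.

```javascript
class Ripple {
  constructor(x, y, maxRadius = 80, growth = 1.5) {
    this.x = x;
    this.y = y;
    this.radius = 0;
    this.maxRadius = maxRadius; // radius at which the ripple has fully faded
    this.growth = growth;       // pixels added to the radius per frame
  }

  // Expand by one frame, never exceeding maxRadius.
  update() {
    this.radius = Math.min(this.radius + this.growth, this.maxRadius);
  }

  // Opacity falls linearly from 1 at impact to 0 at maxRadius.
  get opacity() {
    return 1 - this.radius / this.maxRadius;
  }

  get finished() {
    return this.radius >= this.maxRadius;
  }
}

// Advance every ripple one frame and drop the ones that have faded out.
function stepRipples(ripples) {
  for (const r of ripples) r.update();
  return ripples.filter((r) => !r.finished);
}
```

In a p5.js sketch, each ripple could be drawn with `noFill()`, a stroke whose alpha is `opacity * 255`, and `circle(x, y, radius * 2)`. Note that this version only overlaps ripples visually; it does not simulate physical wave interference.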

Audio Integration

Collision events trigger synthesized sounds using p5.sound. Pitch varies based on drop size and position, creating a generative soundscape that mirrors the visual rainfall.
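One way to map a drop's size and position to pitch is sketched below. The source does not say which mapping is used, so everything here is an assumption chosen for illustration: the pentatonic scale, the C3 root, the size range, and the function names `midiToHz` and `dropToPitch`. The code is pure JavaScript and makes no p5.sound calls.

```javascript
// Pentatonic scale degrees in semitones above the root (assumed choice:
// any combination of these notes tends to sound consonant).
const PENTATONIC = [0, 2, 4, 7, 9];
const ROOT_MIDI = 48; // C3, an arbitrary root chosen for illustration

// Standard equal-temperament conversion: MIDI note 69 is A4 = 440 Hz.
function midiToHz(midi) {
  return 440 * Math.pow(2, (midi - 69) / 12);
}

function dropToPitch(x, width, size, minSize = 1, maxSize = 6) {
  const clamp01 = (v) => Math.min(1, Math.max(0, v));
  // Horizontal position selects the scale degree: left is low, right is high.
  const pos = clamp01(x / width);
  const degree = Math.min(
    PENTATONIC.length - 1,
    Math.floor(pos * PENTATONIC.length)
  );
  // Larger drops sound lower: size selects how many octaves (0 to 2) to add.
  const sizeNorm = clamp01((size - minSize) / (maxSize - minSize));
  const octave = Math.round((1 - sizeNorm) * 2);
  const midi = ROOT_MIDI + PENTATONIC[degree] + 12 * octave;
  return { midi, freq: midiToHz(midi) };
}
```

In a p5.js sketch, the returned `freq` could be passed to a `p5.Oscillator` through its `freq()` method when a collision occurs, with `amp()` used to fade the note in and out. Quantizing to a scale is a design choice: it keeps randomly timed drops from sounding dissonant.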

Flow Field

A Perlin noise-based flow field subtly influences particle movement, adding organic variation to the rain's descent and preventing purely vertical, mechanical-looking motion.
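A flow field like this is often built by turning a noise value into an angle, then into a small force. The sketch below assumes that approach; `flowForce`, `scale`, `strength`, and the 45-degree spread are illustrative names and values, not the project's. The noise function is passed in, so p5.js's `noise(x, y, z)` (which returns values between 0 and 1) can be supplied inside a sketch. The `standInNoise` function is only a placeholder for running outside p5.js and is not Perlin noise.

```javascript
// Returns a small force vector for position (x, y) at time t.
// noiseFn is expected to return values in [0, 1], as p5.js noise() does.
function flowForce(noiseFn, x, y, t, scale = 0.005, strength = 0.05) {
  const n = noiseFn(x * scale, y * scale, t);
  // Map noise to an angle within 45 degrees either side of straight down
  // (PI / 2 in screen coordinates), so the field nudges rather than steers.
  const angle = Math.PI / 2 + (n - 0.5) * (Math.PI / 2);
  return {
    fx: Math.cos(angle) * strength,
    fy: Math.sin(angle) * strength,
  };
}

// Placeholder for use outside p5.js: smooth and in [0, 1], but NOT Perlin noise.
const standInNoise = (x, y, t) =>
  0.5 + 0.5 * Math.sin(x * 12.9898 + y * 78.233 + t);

// Example: sample the field at one point.
const sample = flowForce(standInNoise, 200, 120, 0.01);
```

Keeping `strength` small relative to gravity means the field adds sway without overriding the downward fall. Advancing `t` slowly each frame makes the field drift over time.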

Aesthetic Implementation

Visual Harmony

The color palette is deliberately minimal — deep navy backgrounds with soft cyan and white particles. This creates a nocturnal, contemplative atmosphere that emphasizes the luminosity of each raindrop. Transparency and additive blending modes enhance the ethereal quality, with overlapping elements creating subtle color interactions.

Audio-Visual Harmony

Sound and image are tightly coupled: the visual intensity of ripples corresponds to audio amplitude, while color temperature shifts with pitch. This synaesthetic approach creates a unified sensory experience where seeing and hearing become intertwined.
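The coupling described above could be expressed as a single mapping function. The version below is a hedged sketch: the source does not specify the direction of the colour shift, the frequency range, or the peak level, so the `COOL` and `WARM` colours, the 130 to 880 Hz range, the 0.5 amplitude cap, and the name `coupleAudioVisual` are all assumptions.

```javascript
const COOL = [120, 220, 255]; // soft cyan for low pitches (assumed)
const WARM = [255, 240, 220]; // warm white for high pitches (assumed)

// Derive audio amplitude from ripple opacity, and particle colour from pitch.
function coupleAudioVisual(rippleOpacity, freq, minFreq = 130, maxFreq = 880) {
  const clamp01 = (v) => Math.min(1, Math.max(0, v));
  // A brighter ripple means a louder sound; the peak is capped at 0.5.
  const amplitude = clamp01(rippleOpacity) * 0.5;
  // Pitch is perceived roughly logarithmically, so blend in log-frequency.
  const t = clamp01(Math.log(freq / minFreq) / Math.log(maxFreq / minFreq));
  // Linear blend from COOL (t = 0) to WARM (t = 1), per RGB channel.
  const color = COOL.map((c, i) => Math.round(c + (WARM[i] - c) * t));
  return { amplitude, color };
}
```

Deriving both outputs from the same two inputs is what keeps sound and image in step: a ripple cannot fade visually without its sound fading too.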

Reflections & Lessons Learned

This project was a meaningful exploration of how code can evoke emotional and sensory experiences. The challenge of synchronizing visual and audio elements reinforced the importance of careful timing and parameter tuning in generative systems.

Working with physics simulation taught me that small changes in numerical parameters can dramatically affect the "feel" of a piece — the difference between rain that feels heavy and oppressive versus light and refreshing often came down to subtle adjustments in gravity and damping values.

Looking forward, I'm interested in exploring more complex mappings between visual and audio parameters, potentially incorporating machine learning to create more sophisticated generative compositions.

Built With

- p5.js

- p5.sound

- Perlin Noise

- Particle Physics

- Generative Audio